Bulk load methodĭiscussion of the bulk load performance test results

The tests were run on my Laptop with Fedora Linux 37, untuned PostgreSQL v16 with local domain socket connections and ext4 file systems on an NVMe disk. Performance comparison of the bulk load methods That won’t speed up loading, but it avoids the overhead of setting hint bits on the rows by the first reader and anti-wraparound autovacuum on the table. Note that you can use COPY (FREEZE) if you create or truncate the table in the same transaction. The down side of COPY is that it is a non-standard SQL statement, so not all APIs support it, and you cannot use it in programs that are to support other database systems as well. Let’s see how much better it really is! For this test, I’ll load all 10 million rows with a single COPY statement. It is well known that COPY is the fastest way to load data into PostgreSQL. The idea is to combine the benefits of prepared statements and multi-line INSERTs. PREPARE stmt(bigint,text,bigint,text.) AS This test is like the previous one, except that I’ll use a prepared statement: Multi-line INSERTs with a prepared statement in one transaction The benefit is that there are fewer client-server round trips, and PostgreSQL has to plan and execute fewer statements. You can insert several rows with a single INSERT statement:įor this test, I’ll insert 1000 rows per statement, so there will be 10000 such INSERT statements. With short statements, that can be a notable performance improvement. The performance should be better, because PostgreSQL can reuse the execution plan for the INSERT statement rather than planning it 10 million times. INSERT INTO instest (id, value) VALUES ($1, $2) The difference to the previous test is that we use a prepared statement: Single INSERTs with a prepared statement in one transaction That is bound to boost performance quite a lot. The only difference to the previous test is that we will insert all 10 million rows in a single transaction. 
The effect won’t be as marked as with synchronous_commit, but you can never lose a committed transaction. That can reduce the number of I/O requests. Set commit_delay to a value greater than 0 and tune commit_siblings.

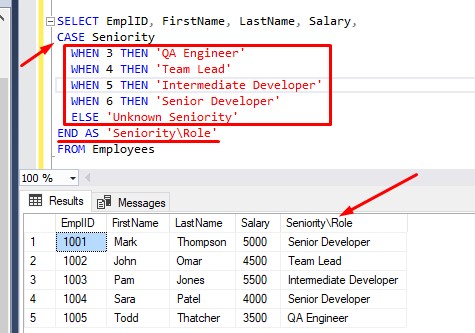

That will boost performance amazingly, but an operating system crash could lead to some committed transactions getting lost. There are some remedies available to boost performance in such a case: But it is the way a transactional application loads data into the database, with many clients inserting small amounts of data, and no way to bundle the individual requests into bigger transactions. Naturally, this is not the correct thing to do for a bulk load. For each transaction, PostgreSQL has to write the WAL (the transaction log) out to disk, which leads to 10 million I/O requests. This will be terribly slow, since each statement will run in its own transaction. I’ll try the following six techniques: Single INSERTs in autocommit mode (the fool’s way) I am aware that the default configuration is not perfect for bulk loading, but that does not bother me, since I am only interested in comparing the different methods. The test will be performed on PostgreSQL v16 with the default configuration. Moreover, dropping indexes before the bulk load and re-creating them afterwards can be slower, if the table already contains data.įor the performance test, I’m going to load 10 million rows that look like (1, '1'), (2, '2') and so on, counting up to 10000000. Loading data would be much faster without the index, but in real life you cannot always drop all indexes and constraints before loading data. It is a narrow table (only two columns), but it has a primary key index. I’ll add some recommendations for parameter settings to improve the performance even more. I decided to compare their performance in a simple test case. 
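The three single-INSERT variants described in this post differ only in how the same statements are wrapped. The following is a minimal sketch of that wrapping. It assumes the statements are sent as a script through psql, which runs in autocommit mode by default.

The generator `single_inserts`, the helper `quote_literal` and the statement name `ins` are hypothetical. SQL-level PREPARE/EXECUTE stands in for whatever prepared-statement mechanism the original test program used.

```python
from typing import Iterator


def quote_literal(text: str) -> str:
    """Quote a string as an SQL literal (assumes standard_conforming_strings = on)."""
    return "'" + text.replace("'", "''") + "'"


def single_inserts(n: int, one_transaction: bool, prepared: bool) -> Iterator[str]:
    """Yield a psql script inserting rows (1, '1') .. (n, 'n') one row per statement."""
    if one_transaction:
        # Without BEGIN, psql's autocommit makes every INSERT its own transaction.
        yield "BEGIN;"
    if prepared:
        # Hypothetical statement name "ins": parsed once here, then executed n times.
        yield "PREPARE ins(bigint, text) AS INSERT INTO instest (id, value) VALUES ($1, $2);"
    for i in range(1, n + 1):
        value = quote_literal(str(i))
        if prepared:
            yield f"EXECUTE ins({i}, {value});"
        else:
            yield f"INSERT INTO instest (id, value) VALUES ({i}, {value});"
    if one_transaction:
        yield "COMMIT;"


if __name__ == "__main__":
    # Small demo: the prepared, single-transaction variant for three rows.
    for line in single_inserts(3, one_transaction=True, prepared=True):
        print(line)
```

Only the BEGIN/COMMIT pair separates 10 million commits, each with its own WAL flush under the default synchronous_commit = on, from a single commit at the end.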
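The prepared multi-line variant needs a PREPARE whose parameter list repeats `bigint, text` once per row. It also needs an EXECUTE per batch with exactly that many arguments.

As a rough sketch, assuming SQL-level PREPARE/EXECUTE in a psql script, the hypothetical helpers `prepare_multi_row` and `execute_multi_row` build both statements. The statement name `stmt` is taken from the article.

```python
from typing import List, Sequence, Tuple

Row = Tuple[int, str]


def quote_literal(text: str) -> str:
    """Quote a string as an SQL literal (assumes standard_conforming_strings = on)."""
    return "'" + text.replace("'", "''") + "'"


def prepare_multi_row(name: str, rows_per_stmt: int) -> str:
    """PREPARE a statement taking one (bigint, text) parameter pair per row."""
    types = ", ".join(["bigint, text"] * rows_per_stmt)
    groups = ", ".join(
        f"(${2 * i + 1}, ${2 * i + 2})" for i in range(rows_per_stmt)
    )
    return f"PREPARE {name}({types}) AS INSERT INTO instest (id, value) VALUES {groups};"


def execute_multi_row(name: str, batch: Sequence[Row], rows_per_stmt: int) -> str:
    """EXECUTE the prepared statement; the batch must match its arity exactly."""
    if len(batch) != rows_per_stmt:
        # A prepared statement has a fixed number of parameters.
        raise ValueError("a short final batch needs its own statement")
    args: List[str] = [f"{row_id}, {quote_literal(value)}" for row_id, value in batch]
    return f"EXECUTE {name}({', '.join(args)});"
```

Because 10,000,000 is divisible by 1000, the test never produces a short final batch. A real loader would need a second, smaller statement (or a plain multi-row INSERT) for any leftover rows.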
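COPY's text format sends one line per row, with tab-separated columns and backslash escapes for special characters. The data ends with a `\.` line when it is read inline from a psql script.

The sketch below assumes such a script, so the hypothetical `copy_script` uses `TRUNCATE` to satisfy the FREEZE requirement mentioned above. That is only appropriate for an empty test table, since it deletes existing rows. A driver's COPY support, such as libpq's `PQputCopyData`, would stream the same data lines instead.

```python
from typing import Iterable, Iterator, Tuple

Row = Tuple[int, str]


def copy_escape(value: str) -> str:
    """Escape a value for COPY's text format."""
    # Escape backslashes first so the escapes added below are not doubled.
    return (
        value.replace("\\", "\\\\")
        .replace("\t", "\\t")
        .replace("\n", "\\n")
        .replace("\r", "\\r")
    )


def copy_script(rows: Iterable[Row], freeze: bool = True) -> Iterator[str]:
    """Yield a psql script that loads all rows with one COPY statement."""
    yield "BEGIN;"
    if freeze:
        # FREEZE requires the table to be created or truncated in this transaction.
        # Warning: TRUNCATE removes any rows already in instest.
        yield "TRUNCATE instest;"
    options = " (FREEZE)" if freeze else ""
    yield f"COPY instest (id, value) FROM STDIN{options};"
    for row_id, value in rows:
        # Text format: one line per row, columns separated by a tab.
        yield f"{row_id}\t{copy_escape(value)}"
    yield "\\."  # end-of-data marker
    yield "COMMIT;"
```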
The accepted answer works, but I'd like to share input for those who are looking for a different answer. Thanks to sagi, I've come up with the following query, but I'd like to give a test case as well.

Let us assume this is the structure of our table tbl.

We want to select all Student rows that have Status = 't'; however, we also want to retrieve all Employee rows regardless of their Status.

If we perform SELECT * FROM tbl WHERE type = 'Student' AND status = 't', we only get the following result, and we won't be able to fetch Employees.

And if we perform SELECT * FROM tbl WHERE Status = 't', we only get the following result: it contains an Employee row, but other Employee rows are not included in the result set. One could argue that using IN might work; however, it will give the same result set.
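The table structure and result sets referred to in the answer are not shown, so the following is only a sketch under stated assumptions. It uses an in-memory SQLite database as a stand-in for PostgreSQL, since the WHERE clauses are plain standard SQL.

The columns id, type and status, the four sample rows and the helper `ids_for` are all hypothetical. The combined condition is one way to state the requirement, not necessarily the query the answer refers to.

```python
import sqlite3
from typing import List


def ids_for(conn: sqlite3.Connection, where: str) -> List[int]:
    """Return the ids of rows in tbl matching a WHERE clause (trusted constants only)."""
    return [row[0] for row in conn.execute(f"SELECT id FROM tbl WHERE {where} ORDER BY id")]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tbl (id INTEGER PRIMARY KEY, type TEXT NOT NULL, status TEXT NOT NULL)")
conn.executemany(
    "INSERT INTO tbl (id, type, status) VALUES (?, ?, ?)",
    [(1, "Student", "t"), (2, "Student", "f"), (3, "Employee", "t"), (4, "Employee", "f")],
)

# The two attempts from the answer: neither returns every Employee row.
students_only = ids_for(conn, "type = 'Student' AND status = 't'")
status_only = ids_for(conn, "status = 't'")
# Students with status 't' plus all employees, whatever their status.
combined = ids_for(conn, "(type = 'Student' AND status = 't') OR type = 'Employee'")
print(students_only, status_only, combined)  # [1] [1, 3] [1, 3, 4]
```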
